Ostrava, 24 March 2026 – Modern film effects, animations, and scientific visualisations increasingly involve digital scenes hundreds of gigabytes in size. For the graphics cards tasked with processing these images, this presents a major challenge – their memory is orders of magnitude smaller. Researchers at IT4Innovations National Supercomputing Center have developed a method that allows scenes larger than the combined memory of all GPUs in a system to be rendered efficiently. By cleverly combining GPU memory with the system RAM, the approach enables extremely large scenes to be processed without a significant drop in performance.

Although GPU memory capacity grows every year, it still cannot keep pace with the demands of modern production. Whether in film effects, animation, or scientific visualisation, complex digital environments often contain hundreds of millions of geometric elements and thousands of textures. For example, Disney’s well-known Moana test scene spans nearly 170 gigabytes of data. When such a scene exceeds GPU memory, rendering becomes severely limited or highly inefficient.

The IT4Innovations team addresses this challenge with so-called out-of-core rendering. The principle is simple: the most frequently used parts of a scene remain in the fast GPU memory, while less frequently accessed portions are offloaded to system RAM. To tell the two apart, the algorithm first runs short test computations that record how often each part of the scene is accessed. This minimises slow data access and keeps the computation efficient.
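The placement idea can be illustrated with a minimal Python sketch. It is not the published algorithm: the function name `place_chunks`, the chunk names, the access counts, and the sizes are all hypothetical, and a simple greedy rule (hottest chunks first, until the GPU budget is used up) stands in for whatever statistical analysis the researchers actually apply.

```python
from typing import Dict, Set, Tuple


def place_chunks(access_counts: Dict[str, int],
                 sizes_gb: Dict[str, int],
                 gpu_budget_gb: int) -> Tuple[Set[str], Set[str]]:
    """Greedy placement sketch: keep the hottest chunks in GPU memory.

    access_counts -- accesses per chunk, as recorded by a short test render
    sizes_gb      -- size of each chunk in gigabytes
    gpu_budget_gb -- GPU memory available for scene data
    """
    on_gpu: Set[str] = set()
    in_ram: Set[str] = set()
    free = gpu_budget_gb
    # Visit chunks from most to least frequently accessed.
    for name in sorted(access_counts, key=lambda n: access_counts[n], reverse=True):
        if sizes_gb[name] <= free:
            on_gpu.add(name)        # hot chunk that still fits: keep on the GPU
            free -= sizes_gb[name]
        else:
            in_ram.add(name)        # does not fit: offload to system RAM
    return on_gpu, in_ram


# Hypothetical numbers, chosen only to illustrate the idea.
counts = {"bvh_top": 9000, "island_mesh": 4000, "leaf_textures": 300, "far_ocean": 20}
sizes = {"bvh_top": 2, "island_mesh": 6, "leaf_textures": 10, "far_ocean": 4}
on_gpu, in_ram = place_chunks(counts, sizes, gpu_budget_gb=10)
print(sorted(on_gpu), sorted(in_ram))
```

With these illustrative numbers, the two most frequently accessed chunks fit within the 10 GB budget and stay on the GPU, while the rarely touched ones end up in system RAM.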

“Our goal was to develop a system that allows GPUs to handle scenes far larger than their own memory,” says Milan Jaroš of IT4Innovations. “Results show that even smaller systems with just a few GPUs can now process massive scenes that previously required much larger computing setups.”

The method leverages NVIDIA Unified Memory, which provides a single address space across the CPU and all GPUs. Combined with statistical analysis of data access patterns, this lets the algorithm decide dynamically where each part of the scene should reside, minimising costly data transfers across the system. The approach is compatible with high-speed interconnects between GPUs, such as NVLink, enabling efficient data transfer between processing units.

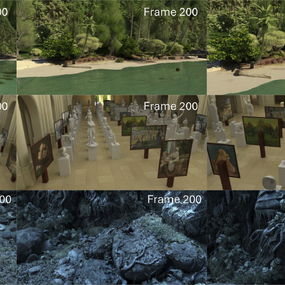

The researchers have also developed a strategy for rendering animations. The system can combine data access statistics from various camera positions, optimising memory use across an entire sequence of frames. This eliminates the need for costly per-frame analyses.
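As a rough illustration of combining statistics across camera positions, the Python sketch below sums per-camera access counts into one table that could then drive a single placement decision for the whole sequence. Summation is an assumption made here for simplicity; the press release does not say how the statistics are merged, and the function name `combine_access_stats` and the sample data are hypothetical.

```python
from collections import Counter
from typing import Iterable, Mapping


def combine_access_stats(per_camera: Iterable[Mapping[str, int]]) -> Counter:
    """Merge access counts gathered from several camera positions.

    Each mapping holds the accesses per scene chunk seen from one camera.
    The result is the total number of accesses per chunk over all cameras.
    """
    total: Counter = Counter()
    for stats in per_camera:
        total.update(stats)  # Counter.update adds counts rather than replacing them
    return total


# Hypothetical samples from three camera positions along an animation path.
samples = [
    {"island_mesh": 500, "leaf_textures": 40},
    {"island_mesh": 300, "far_ocean": 900},
    {"leaf_textures": 10, "far_ocean": 700},
]
combined = combine_access_stats(samples)
print(combined.most_common())
```

A chunk that is rarely seen from one camera but heavily used from another, such as `far_ocean` here, ranks high in the combined table, so one placement can serve the whole sequence instead of being recomputed for every frame.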

The efficiency of the new method has been tested on several computing systems, including the Karolina and Barbora supercomputers and the NVIDIA DGX-2 system at IT4Innovations. Trials with extremely demanding scenes exceeding 100 gigabytes demonstrated that the approach works across different hardware architectures, efficiently utilising both modern high-speed GPU interconnects and standard communication buses.

Importantly, the solution does not require any major changes to the rendering engine. It can be integrated into popular open-source software such as Blender Cycles, opening new possibilities not only for large production studios but also for smaller creators, research teams, and scientific institutions working with very large 3D datasets.

This new approach, published in the Future Generation Computer Systems journal, demonstrates that even smaller multi-GPU systems can handle massive scenes effectively. Intelligent data management between GPU memory and system RAM represents a significant step towards making high-quality rendering more accessible across a wide range of applications – from film production to scientific visualisation.